The Electrical Grid Resilience and Assessment System (EGRASS) is a complex web application that visualizes the effects of hurricanes on the Puerto Rican power grid.

For my Senior Capstone project, I collaborated with the Pacific Northwest National Laboratory to redesign EGRASS to better suit the needs of climate scientists and government agencies.

My role

- UX Designer & Researcher on a 4-person student design team

- User research, UX & UI design, prototyping

Results

- Redesigned web app currently in use by the Puerto Rico Electric Power Authority and LUMA Energy

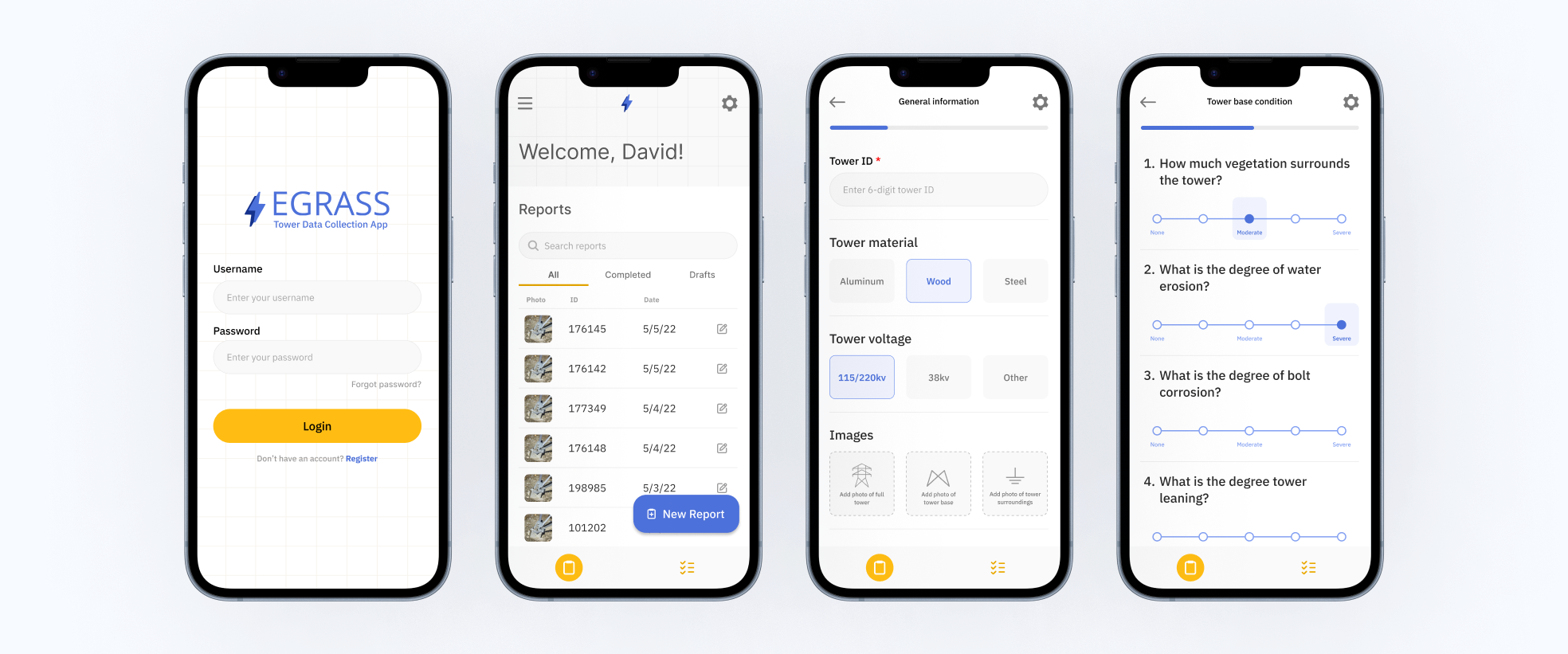

- Developed a mobile app for recording electrical tower condition

Unpredictable storms and an unreliable power grid are a recipe for disaster. EGRASS allows scientists to predict damage to the power grid through storm simulations.

The Puerto Rican power grid is extremely vulnerable during storm events. The Pacific Northwest National Laboratory (PNNL) developed EGRASS to visualize the effects of storms on the power grid so that power companies can share accurate storm and recovery information with government officials.

However, the first iteration of EGRASS received harsh pushback from users due to its poor usability and misalignment with user needs. Moreover, EGRASS lacked the electrical tower condition data needed to visualize storm damage, rendering it useless in real-world contexts.

The EGRASS interface needed to better match user goals. And it needed real data.

Two design challenges emerged: (1) Redesign the EGRASS module to better address user needs. (2) Design a data collection system for tower technicians to record electrical tower condition across Puerto Rico.

Bonus Challenge: My team started 12 weeks late on this project due to information security hurdles. We weren’t able to access EGRASS for the first few weeks of our project, a rough start to an otherwise great experience!

We developed a four-phase design plan to fill the gaps in existing user research and redesign EGRASS.

1. Design Review to…

- Develop a baseline knowledge of applications with non-linear task flows

- Understand the current state of EGRASS

2. User Research to…

- Understand user goals, requirements, and pain points

- Generate themes describing user experiences

- Establish design requirements for EGRASS and a data collection tool

3. Design and Prototyping to…

- Ideate solutions that address the distinct user groups

- Create wireframes and mid-fidelity prototypes for EGRASS and a data collection mobile app

4. Feedback and Iteration to…

- Confirm feasibility of our solution with PNNL developers

- Establish a prototype user testing plan for deployment in Puerto Rico

Complex application task flows differ from traditional consumer apps. We needed to understand these differences before redesigning EGRASS.

We started with a literature and design review to familiarize ourselves with complex application design in simulation contexts and to examine the current state of EGRASS. Developing a shared understanding of best practices for highly field-specific tools enabled the design team to identify areas for improvement to investigate further in user research.

After reviewing past user research and the EGRASS interface, we identified several gaps:

- How do goals differ between user groups (government officials, scientists, disaster relief managers, etc.)?

- What type of data do users need to see for their specific roles?

- What type of electrical tower data is necessary for accurate storm damage simulation?

In all of our interviews, users emphasized the importance of viewing different types of data at different stages of storm response.

We interviewed several EGRASS stakeholders, including LUMA and Quanta Power grid operators and tower technicians. We selected power grid operators for their insight into the data they need to make storm response decisions, and tower technicians because we needed to know what data they could feasibly collect in the field.

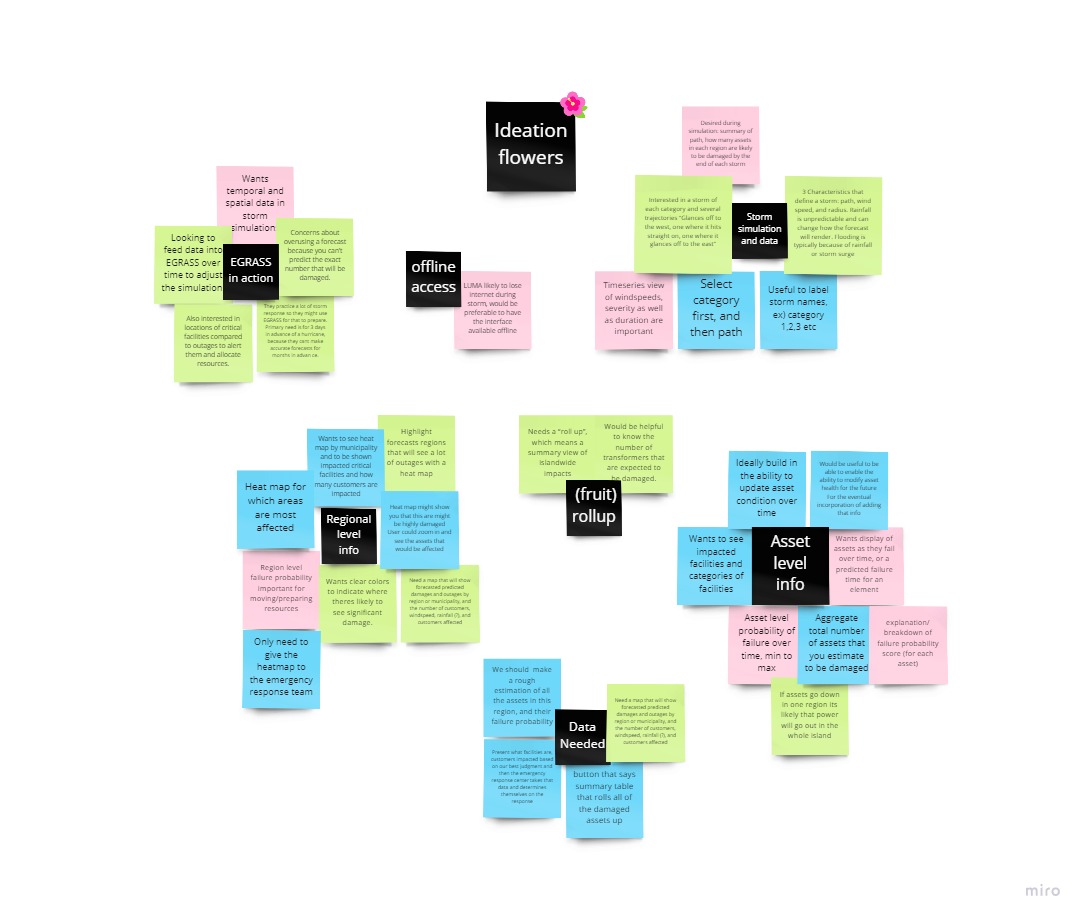

Following interview transcript coding and thematic analysis, we extracted three major themes.

Desired information granularity differs by user. Users with different tasks, positions, and goals require different levels of granularity in storm simulations.

Varied methods for checking asset status. Tower technicians use a variety of methods to test the safety and integrity of structures. Relevant tests depend greatly on the tower material, surroundings, and previous condition data.

Varied affordances required for storm response. Users who directly engage with the storm simulation described the importance of simulation editing and forecast summaries.

The EGRASS Risk Analysis Module needs to display relevant, digestible, and shareable information.

Our user research revealed several areas for improvement and new features that users need.

- Asset details: a short description of tower condition and status

- Risk rating: a composite value representing a tower’s risk of failure

- Simulation editing: edit storm simulations by selecting category, path, and duration

- Exportable data summary: generate a quick simulation report ready for presentation

Field technicians were already testing towers for integrity; they just didn’t have a system to collect and funnel this data into EGRASS.

Field technicians always conduct safety tests on towers before beginning maintenance, but results are only reported if the tower is ruled too unsafe for work. A mobile app would allow tower technicians to efficiently log tower condition data without interfering with work activities or relying on special equipment. The varied condition and stress testing metrics lend themselves to a survey-style mobile interface.

- Asset condition scales: report asset condition with numeric scales

- Stress tests: provide instructions and metrics for tower stress tests

- Voice input: afford voice recordings for easy data entry

- Glove-friendly navigation: optimize navigation and interactions for gloved hands

- Offline capability: save data locally when out of service range

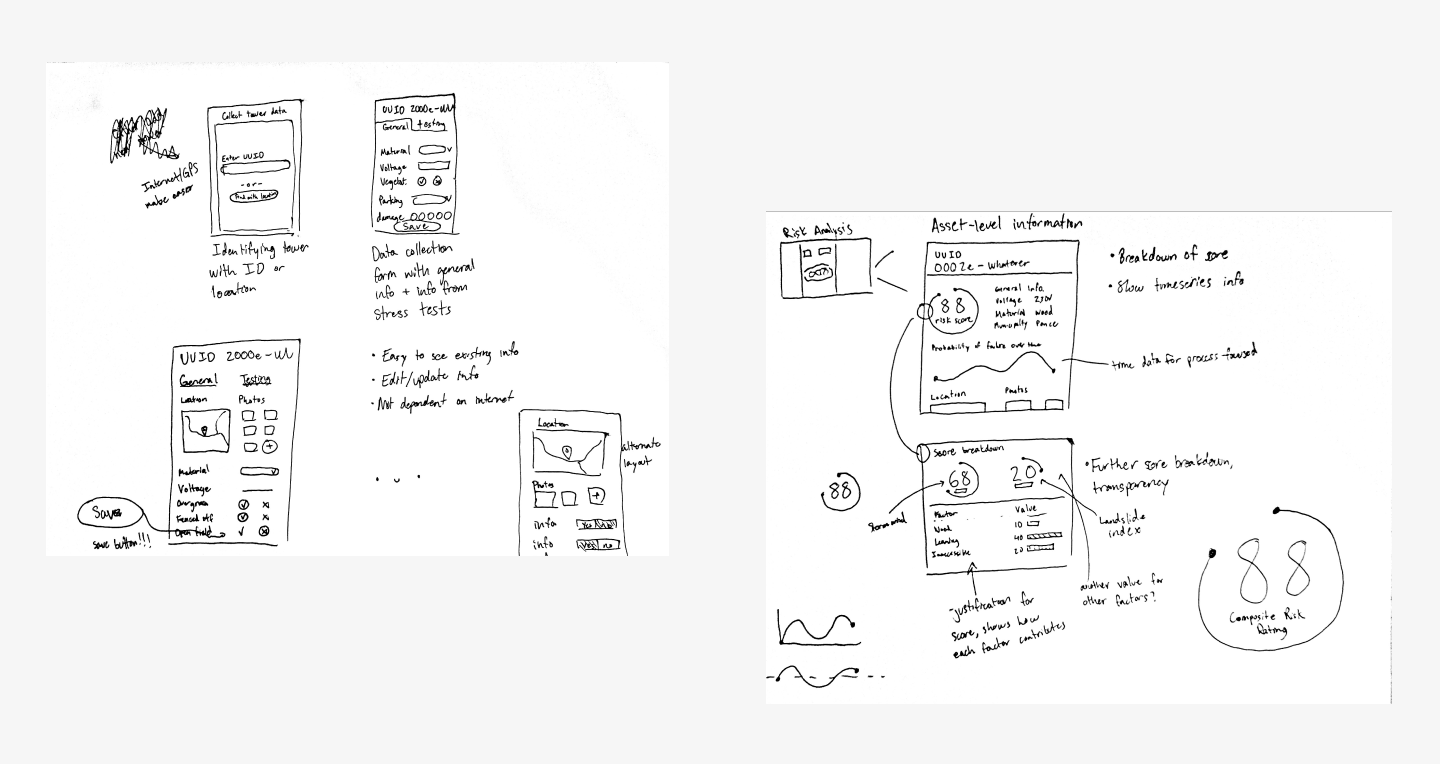

In the Design and Prototyping phase, we ideated solutions for the risk analysis module and data collection app. Our ideation sessions yielded a set of low-fidelity wireframes.

To develop a breadth of ideas, the team engaged in a semi-structured ideation exercise.

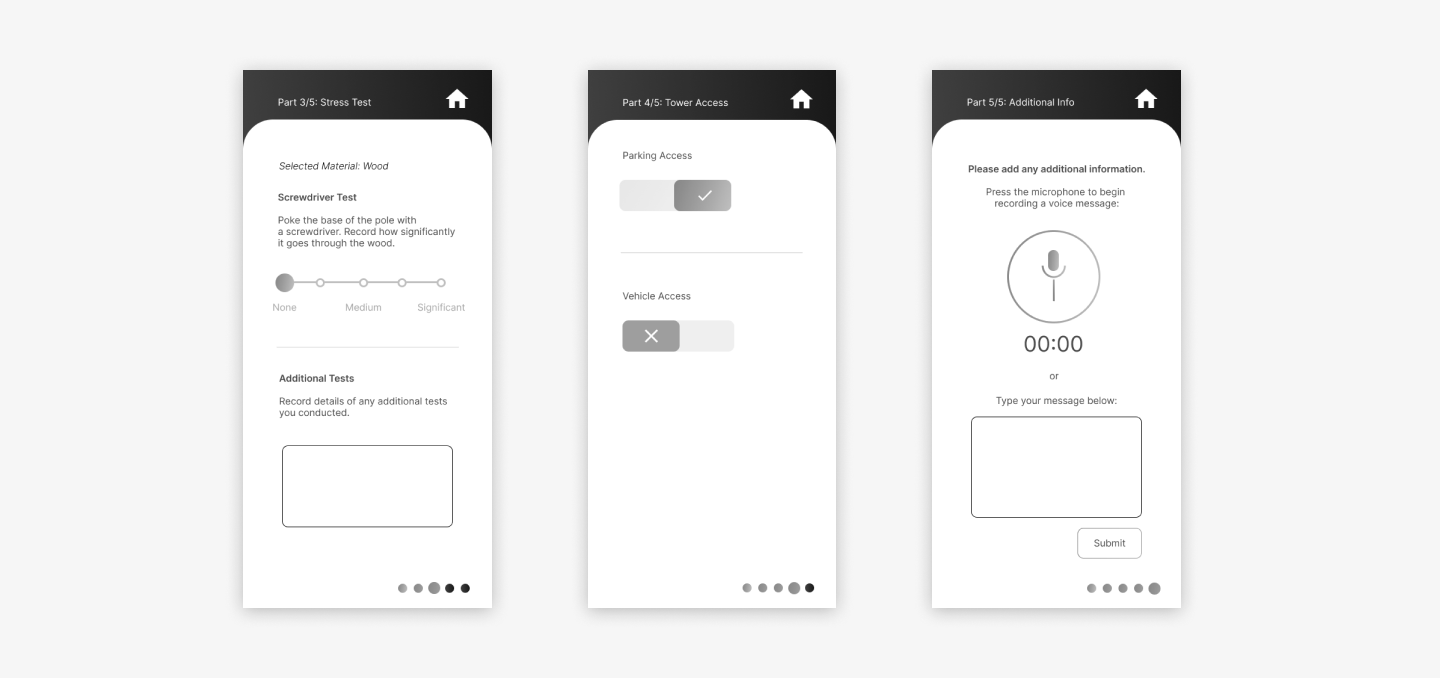

Our focus for the data collection app was efficiency of use, accessibility, and flexibility. From our interviews, we know tower technicians are often very active in the field and may not be able to fill out tower data without interruption, so our app should afford simple, noncontinuous data entry.

Based on our ideation session, we decided to structure the data collection app as a form-filling and storage center.

Each tower requires its own set of condition data, so our prototype expresses this as separate forms for individual towers. We used stress tests and other metrics suggested by participants in our interviews.

Our biggest challenge with these forms was ensuring the data collection process is as easy as possible, as users are likely to be multitasking and wearing gloves. We made sure that data input buttons were large, tappable, and swipeable, and that any required text entries could be filled with voice-to-text.

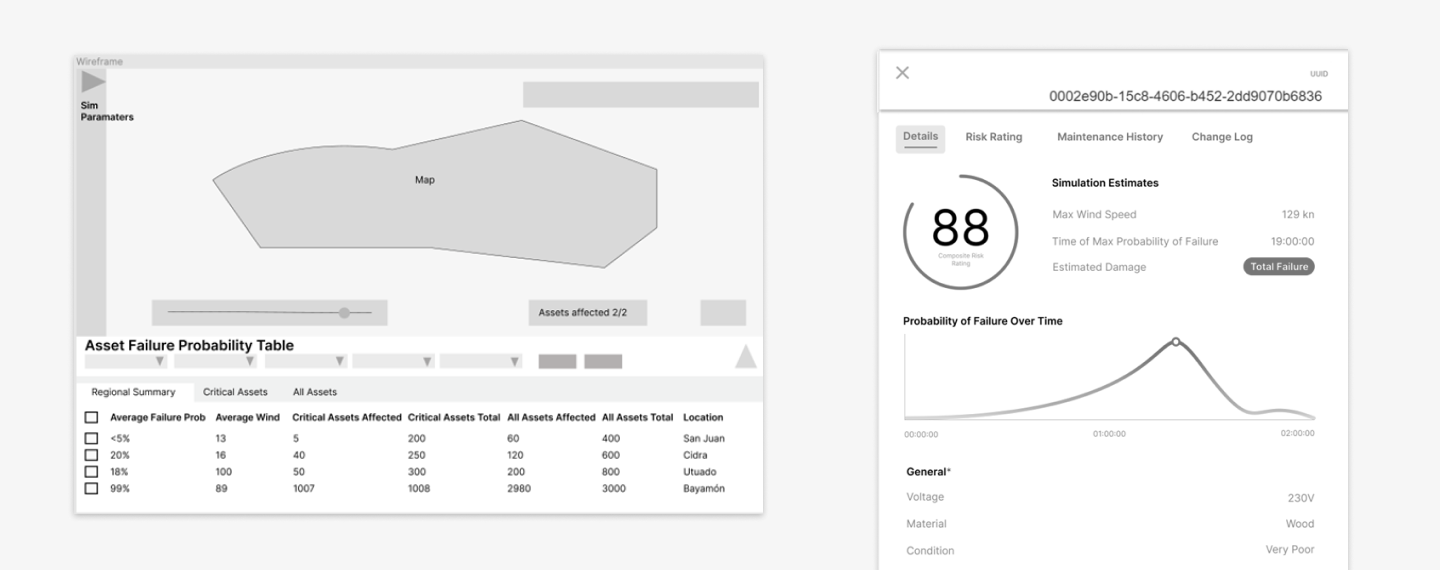

For the risk analysis module, we expanded the asset information table to increase the visibility of asset and storm condition data for users.

Users of this module need to see how simulated storm conditions will affect individual towers, so it’s essential that corresponding data is clearly mapped visually. We also made the storm simulation edit panel more accessible so users can quickly change simulation conditions to match real-world storms as they develop.

I developed a risk rating breakdown screen so users can see exactly how a tower’s conditions contribute to its risk rating score. This feature helps users swiftly identify the towers most in need of repair and decide how to allocate repair teams before potential hurricanes.

The PNNL software development and UX teams evaluated the feasibility of our wireframes.

We requested general feedback on areas for improvement, with emphasis on the feasibility of our proposed features. Feedback for both the risk module and data collection app was very positive, and developers considered all suggested features feasible.

The following feedback was implemented in the high-fidelity prototypes:

- Define breakpoints for data tables and similar elements

- Ensure value edge cases are accounted for (0 and 100)

- Consider lengthy loading times with simulation data

- Increase granularity of slider metrics in the mobile app

With feedback from the UX and development teams, we iterated on the risk analysis module and data collection app and developed the final prototypes.

A mobile app fits data collection seamlessly into tower technician field work.

For the final iteration, we increased the granularity of data metrics and improved the accessibility of text input methods. Below is the mobile flow for logging condition data for an electrical tower.

The individual asset information interface helps officials predict regional electrical failure.

The asset information panel displays the estimated damage to an individual asset as well as general asset information. A line graph shows the asset’s probability of failure over time, with the point at the top of the curve marking the time of maximum probability of failure. Users can hover anywhere over the line to see the data values for that point. Further below is general information about the asset, which remains similar to the previous EGRASS interface.

The Risk Rating Breakdown displays the factors contributing to an electrical tower’s risk of failure.

The risk rating section provides a detailed explanation of the composite risk rating, a previously ambiguous score. Below the breakdown summary is a more detailed explanation of the score calculation metrics. Users can modify individual values to see how each change affects the asset’s probability of failure in the simulation. When a user enters a new value, the scores above update to reflect the modification. We ensured that modified scores would not be confused with actual scores by including a “modified score” warning above changed values.
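To make the breakdown behavior concrete, here is a minimal sketch of how a composite rating with user overrides could be computed. It is an illustration only, not the actual EGRASS calculation: the factor names, the weights, the weighted-average formula, and the assumption that a higher score means higher risk are all hypothetical. It does reflect two points from the prototype feedback: values are clamped to the 0 and 100 edge cases, and any user change flags the result so the interface can show the “modified score” warning.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical factors and weights, chosen only for illustration.
# The real EGRASS risk rating inputs are not described in this case study.
WEIGHTS: Dict[str, float] = {
    "structural_condition": 0.5,
    "material_age": 0.3,
    "surroundings": 0.2,
}


@dataclass(frozen=True)
class RiskRating:
    score: float    # composite value on an assumed 0-100 scale
    modified: bool  # True when a user override changed any factor


def clamp(value: float, low: float = 0.0, high: float = 100.0) -> float:
    """Keep a factor value inside the 0-100 range (covers the edge cases)."""
    return max(low, min(high, value))


def risk_rating(
    recorded: Dict[str, float],
    overrides: Optional[Dict[str, float]] = None,
) -> RiskRating:
    """Weighted average of factor values, with optional what-if overrides."""
    overrides = overrides or {}
    unknown = set(overrides) - set(WEIGHTS)
    if unknown:
        raise KeyError(f"unknown factors: {sorted(unknown)}")

    total = 0.0
    for factor, weight in WEIGHTS.items():
        # Prefer the user's what-if value; fall back to the recorded one.
        value = overrides.get(factor, recorded[factor])
        total += weight * clamp(value)

    # Flag the result only if an override actually differs from the record.
    changed = any(
        clamp(value) != clamp(recorded[factor])
        for factor, value in overrides.items()
    )
    return RiskRating(score=round(total, 1), modified=changed)


recorded = {"structural_condition": 80, "material_age": 50, "surroundings": 20}
actual = risk_rating(recorded)
what_if = risk_rating(recorded, {"structural_condition": 100})
```

Keeping the recorded values separate from the overrides means the actual score is never overwritten, which is what lets the interface label a changed score as modified rather than presenting it as real data.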